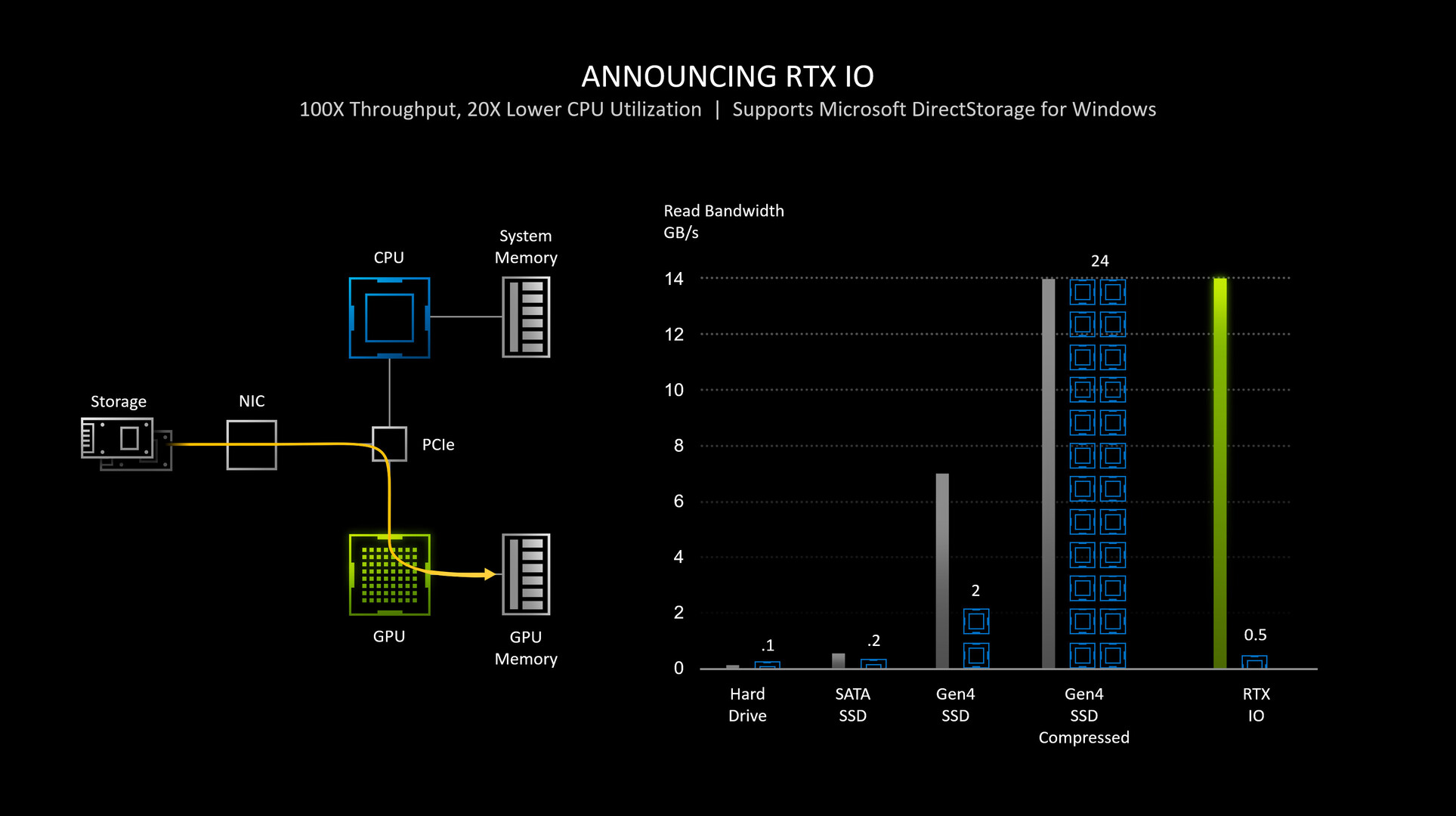

NVIDIA RTX IO Detailed: GPU-assisted Storage Stack Here to Stay Until CPU Core-counts Rise | TechPowerUp

DeepSpeed: Accelerating large-scale model inference and training via system optimizations and compression - Microsoft Research

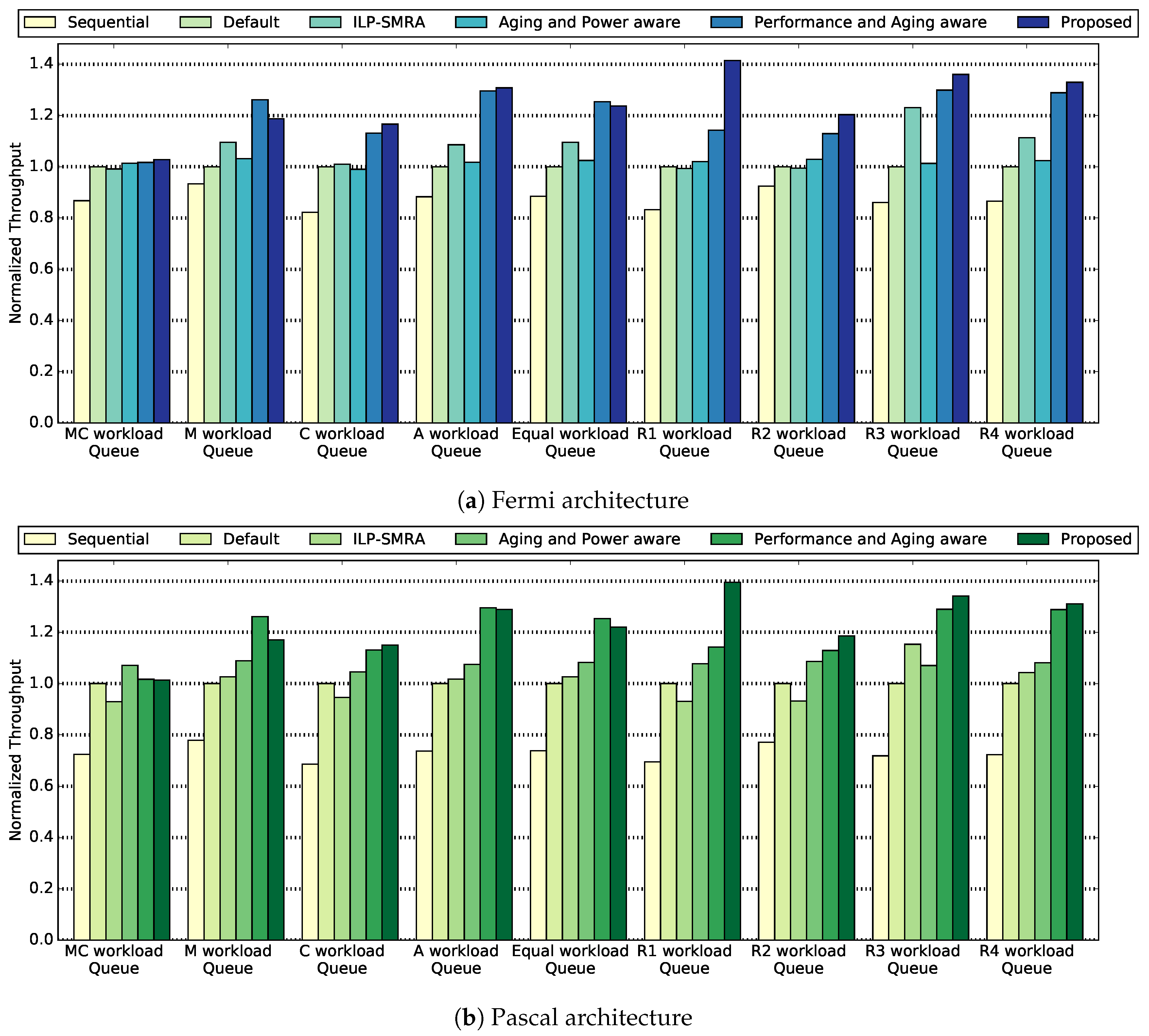

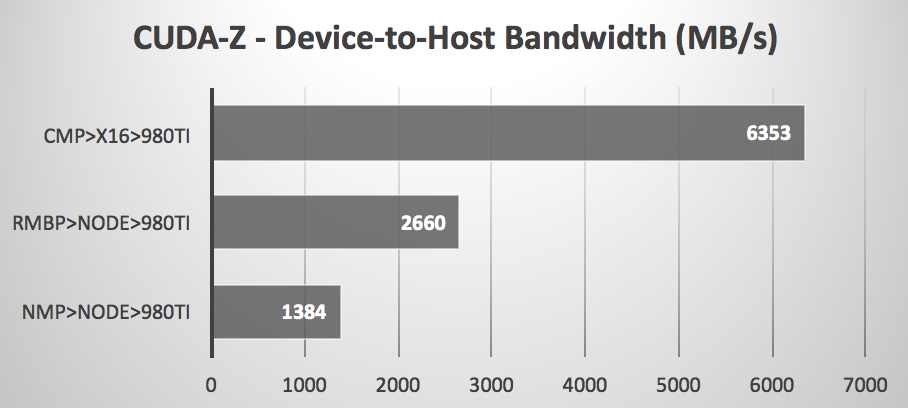

Accelerating and Maximizing the Performance of Telco Workloads Using Virtualized GPUs in VMware vSphere - VROOM! Performance Blog

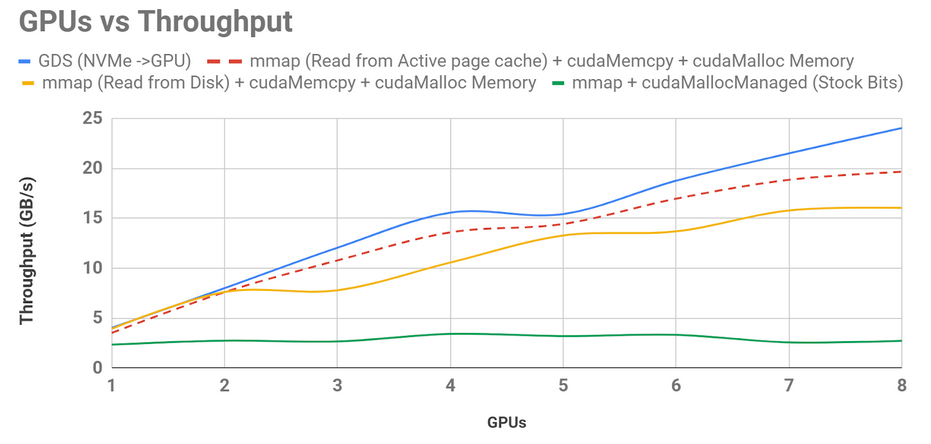

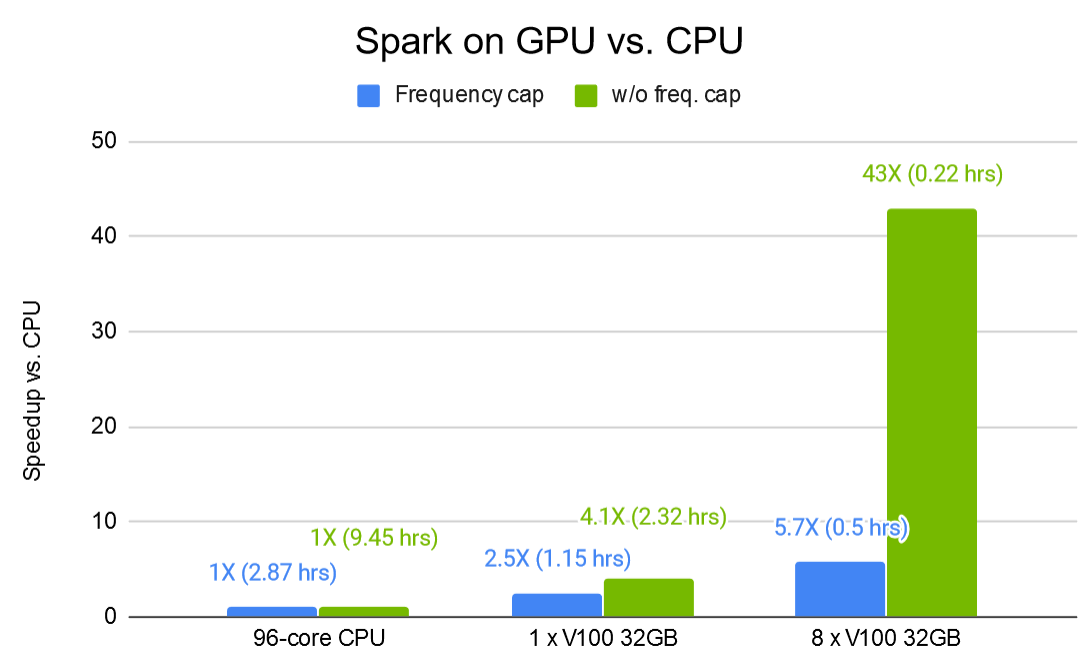

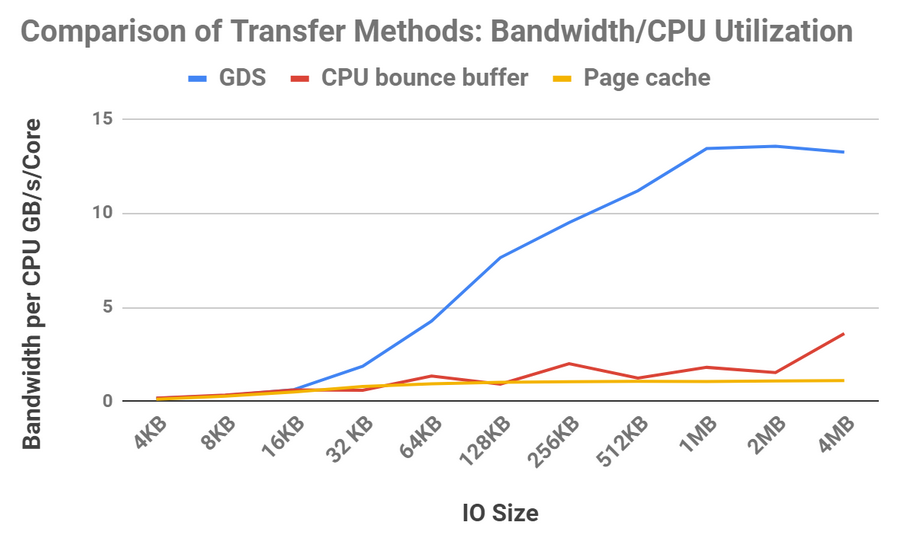

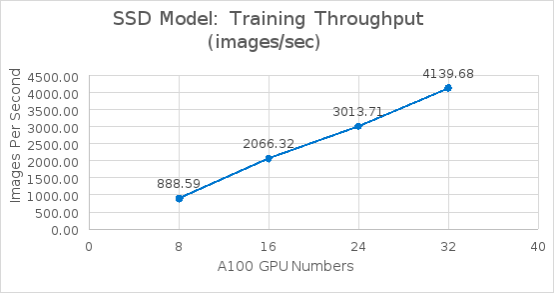

Test results and performance analysis | PowerScale Deep Learning Infrastructure with NVIDIA DGX A100 Systems for Autonomous Driving | Dell Technologies Info Hub

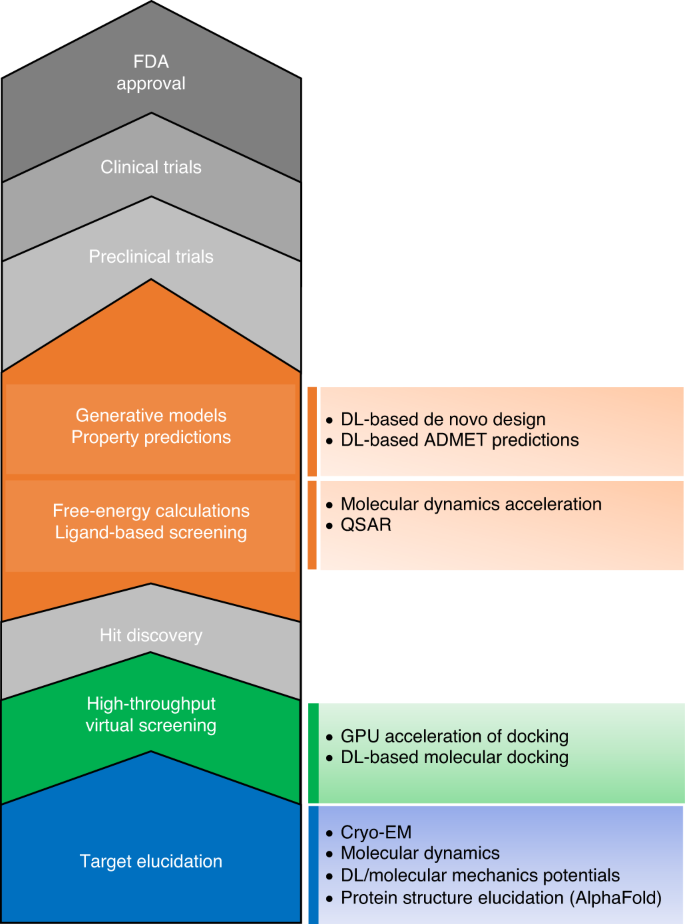

The transformational role of GPU computing and deep learning in drug discovery | Nature Machine Intelligence

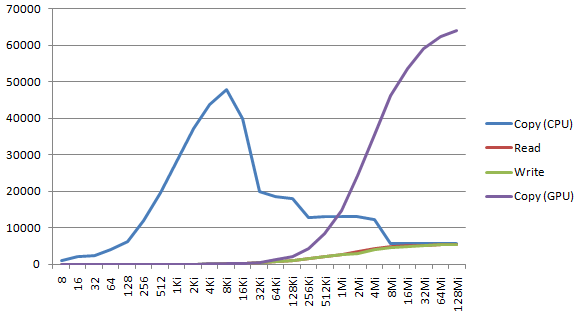

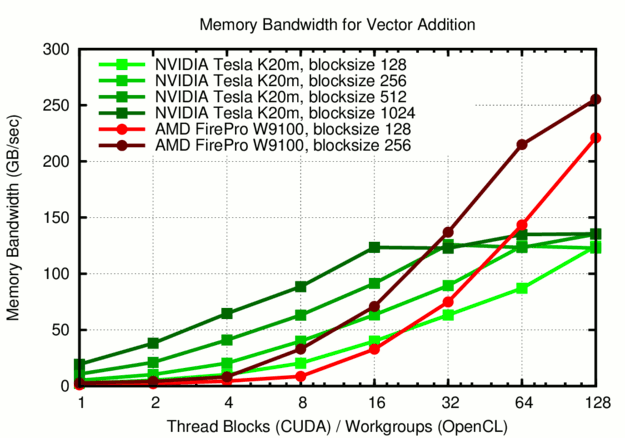

GPUs greatly outperform CPUs in both arithmetic throughput and memory...